
The Need

I recently needed to convert my home server from a single disk to raid 1 without losing data or reinstalling the system. I found various articles on this, but mostly for old versions of redhat/centos and debian/ubuntu, and for older initramfs/grub versions. For personal reference, and to thank all the people who share information, I'm writing this article.

Main reason for this headache: to have a safer place to store some important data and, since I mostly use really cheap or 'cost zero' hardware, a safer place for my CentOS 7 installation.

I'm no expert; follow these instructions at your own risk. I'm not responsible for data loss or any damage that might occur from following these instructions. It just worked for me.

Backup all your data

Remember that raid 1 is not a backup, always do your backups!!!

Comments/Discuss

This is a really small wiki for personal use, no talk/discuss or user registration is allowed.

Feel free to contact me for any info, comments, personal experience or corrections to this page.

'cmatthew' [dot] 'net' [at] 'gmail' [dot] 'com'

- 1 start

- 2 new disk

- 3 transfer data

- 4 grub2 and initramfs

- 5 reboot

- 6 monitoring

start

current setup

1x Seagate Barracuda 500 GB as /dev/sda with 3 partitions.

Current partitions are XFS, not using LVM.

what we need

I'm adding a second identical disk, /dev/sdb, for the raid 1 setup. The raid will be a linux software raid managed by 'mdadm'; be sure to have the package installed.

Be also sure to have a lot of patience, junk food and caffeine as usual :)

backup

A full working backup of everything.

new disk

plug in new disk

It shows up as /dev/sdb, pretty obvious.

partitions

Create the same partition scheme as the current disk /dev/sda on the new disk /dev/sdb
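A minimal sketch of this step, assuming both disks use an MBR (msdos) partition table and that sfdisk from util-linux is installed. The helper name copy_partition_table is hypothetical and not from the original article. It dumps the layout of the source disk and replays it on the target, so whatever partition table the target had is overwritten.

```shell
# Copy the partition layout from one disk to another (assumes MBR).
# WARNING: the partition table on the target disk is overwritten.
copy_partition_table() {
    src="$1"   # existing disk, e.g. /dev/sda
    dst="$2"   # new, empty disk, e.g. /dev/sdb
    sfdisk -d "$src" | sfdisk "$dst"
}

# Example (as root): copy_partition_table /dev/sda /dev/sdb
```

Double check the argument order before running it: swapping source and target would wipe the layout of the disk you want to keep.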

Check

Convert new disk /dev/sdb partitions to 'Linux raid autodetect'
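One possible way to do the conversion non-interactively, assuming an MBR disk and that parted is installed (the original may well have used fdisk instead). On MBR, parted's "raid" flag corresponds to partition type fd, 'Linux raid autodetect'. The helper name set_raid_flag and the partition count of 3 are assumptions based on the setup described above.

```shell
# Mark partitions 1..N of a disk as RAID members.
# On an MBR disk the "raid" flag maps to type fd (Linux raid autodetect).
set_raid_flag() {
    disk="$1"    # e.g. /dev/sdb
    count="$2"   # number of partitions, 3 in this setup
    n=1
    while [ "$n" -le "$count" ]; do
        parted -s "$disk" set "$n" raid on
        n=$((n + 1))
    done
}

# Example (as root): set_raid_flag /dev/sdb 3
```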

Check

create degraded raid 1

Create a degraded raid 1 array for each partition on the new disk /dev/sdb
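A sketch of how the degraded arrays could be created. The keyword "missing" tells mdadm to leave one slot of the mirror empty, so the array starts with only the new disk in it. The mapping of partition N to /dev/md(N-1) and the helper name create_degraded_mirrors are assumptions for illustration, not something the original states.

```shell
# Create one two-way mirror per partition, each with an empty slot
# ("missing") that the old disk will fill later.
create_degraded_mirrors() {
    disk="$1"    # new disk, e.g. /dev/sdb
    count="$2"   # number of partitions, 3 in this setup
    n=1
    while [ "$n" -le "$count" ]; do
        # Assumed naming: sdb1 -> md0, sdb2 -> md1, sdb3 -> md2
        mdadm --create "/dev/md$((n - 1))" --level=1 --raid-devices=2 \
            missing "${disk}${n}"
        n=$((n + 1))
    done
}

# Example (as root): create_degraded_mirrors /dev/sdb 3
```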

Check
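The kernel reports array state in /proc/mdstat. At this stage every array should show one empty slot, which appears as an underscore in the status field, for example [_U]. The small helper below (hypothetical name degraded_arrays) is one way to list the arrays that have an empty slot; plain "cat /proc/mdstat" works just as well for a quick look.

```shell
# Read mdstat-formatted text on stdin and print the name of every
# array whose member status (e.g. [_U]) contains an empty slot.
degraded_arrays() {
    awk '/^md/ { name = $1 }
         /\[[U_]*_[U_]*\]/ { print name }'
}

# Example: degraded_arrays < /proc/mdstat
```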

create filesystem raid 1

Create a filesystem on each newly created raid 1 device
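A sketch of this step under a loudly stated assumption: the article does not say what the three partitions hold, so the example guesses /boot, swap and / in that order. Adjust the devices to your own layout. The helper name make_filesystems is hypothetical.

```shell
# Format the new arrays. Which array holds what is an ASSUMPTION here:
# pass the devices that match your own partition layout.
make_filesystems() {
    boot_dev="$1"   # assumed /boot, e.g. /dev/md0
    swap_dev="$2"   # assumed swap,  e.g. /dev/md1
    root_dev="$3"   # assumed /,     e.g. /dev/md2
    mkfs.xfs "$boot_dev"
    mkswap "$swap_dev"
    mkfs.xfs "$root_dev"
}

# Example (as root): make_filesystems /dev/md0 /dev/md1 /dev/md2
```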

transfer data

mount

Mount both / and /boot
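A sketch of the mount step. The mount point /mnt/raid and the device names in the example are assumptions; the helper name mount_raid_target is hypothetical. The root array is mounted first so that the boot array can be mounted inside it.

```shell
# Mount the new root array, then the new boot array inside it.
mount_raid_target() {
    target="$1"     # e.g. /mnt/raid
    root_dev="$2"   # array that will hold /,     e.g. /dev/md2
    boot_dev="$3"   # array that will hold /boot, e.g. /dev/md0
    mkdir -p "$target"
    mount "$root_dev" "$target"
    mkdir -p "$target/boot"
    mount "$boot_dev" "$target/boot"
}

# Example (as root): mount_raid_target /mnt/raid /dev/md2 /dev/md0
```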

copy existing data

I'm no rsync expert, but this did the job for me.
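The exact rsync command from the original is not preserved, so the following is only a plausible sketch. It assumes the new arrays are mounted under a target directory such as /mnt/raid, and copies / and /boot in two passes because -x stops rsync at filesystem boundaries. The helper name copy_system is hypothetical.

```shell
# Copy the running system to the mounted arrays.
#   -a archive mode, -A ACLs, -X extended attributes (SELinux labels),
#   -H hard links, -x stay on one filesystem (skips /proc, /sys, ...)
copy_system() {
    target="$1"   # where the new root array is mounted, e.g. /mnt/raid
    rsync -aAXHx / "$target/"
    rsync -aAXHx /boot/ "$target/boot/"
}

# Example (as root): copy_system /mnt/raid
```

It is probably wise to stop services that write a lot (databases, mail) before copying, so the copy is as consistent as possible.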

grub2 and initramfs

mount system information

Mount both / and /boot (should be already mounted)

System information

chroot

Jail! No harm to current system.
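A sketch of the two steps above, assuming the new root is mounted at a directory such as /mnt/raid. The "system information" is taken here to mean bind mounts of /dev, /proc and /sys, which the tools run inside the chroot need; that reading and the helper name enter_chroot are assumptions.

```shell
# Make the kernel's device and process information visible inside the
# new root, then open a shell there.
enter_chroot() {
    target="$1"   # e.g. /mnt/raid
    for fs in dev proc sys; do
        mount --bind "/$fs" "$target/$fs"
    done
    chroot "$target" /bin/bash
}

# Example (as root): enter_chroot /mnt/raid
```

Everything from here until you type "exit" runs against the copy on the new disk, not against the running system.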

fstab

Edit fstab with the new drive UUID information
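To get the UUIDs, blkid can print just the value for a given device. The sketch below builds an fstab line from it; the helper name fstab_line, the "defaults 0 0" options and the device names in the example are assumptions, so compare the result with the lines already in your fstab.

```shell
# Print an fstab entry for a device, using its filesystem UUID.
fstab_line() {
    dev="$1"      # e.g. /dev/md2
    mnt="$2"      # e.g. /
    fstype="$3"   # e.g. xfs
    uuid=$(blkid -s UUID -o value "$dev")
    printf 'UUID=%s %s %s defaults 0 0\n' "$uuid" "$mnt" "$fstype"
}

# Example (inside the chroot):
#   fstab_line /dev/md2 / xfs
#   fstab_line /dev/md0 /boot xfs
```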

create mdadm configuration
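A common way to generate this file is to let mdadm describe the running arrays. The sketch assumes the CentOS 7 location /etc/mdadm.conf and is meant to be run inside the chroot; the helper name write_mdadm_conf is hypothetical. Note that it overwrites the file rather than appending to it.

```shell
# Write an ARRAY line for every running array to the given file.
write_mdadm_conf() {
    conf="$1"   # /etc/mdadm.conf on CentOS 7
    mdadm --detail --scan > "$conf"
}

# Example (inside the chroot): write_mdadm_conf /etc/mdadm.conf
```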

initramfs

Backup current and create new initramfs
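A sketch of this step using dracut, the initramfs tool on CentOS 7. It assumes the usual image name /boot/initramfs-KERNELVERSION.img and that /etc/mdadm.conf already exists in the chroot; the helper name rebuild_initramfs is hypothetical. The optional second argument only exists so the function can be tried against a scratch directory.

```shell
# Keep a copy of the current initramfs, then rebuild it with the
# local mdadm.conf included.
rebuild_initramfs() {
    kver="$1"              # kernel version, e.g. "$(uname -r)"
    bootdir="${2:-/boot}"  # directory holding the image
    img="$bootdir/initramfs-$kver.img"
    cp -p "$img" "$img.bak"
    dracut --force --mdadmconf "$img" "$kver"
}

# Example (inside the chroot): rebuild_initramfs "$(uname -r)"
```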

grub parameters

Add some default parameters to grub
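The parameters the original added are not preserved. One option that is often used for this purpose is the dracut parameter rd.auto=1, which turns on automatic assembly of md arrays in the initramfs. The fragment below shows how it might look in /etc/default/grub; the other values on the line are placeholders for whatever your file already contains.

```shell
# Fragment of /etc/default/grub (the file uses shell variable syntax).
# Only rd.auto=1 is the addition; the remaining values are ASSUMED
# stand-ins for the existing content of the line.
GRUB_CMDLINE_LINUX="crashkernel=auto rd.auto=1 rhgb quiet"
```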

make new grub config

install grub

Install grub on new disk /dev/sdb
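A sketch covering both the new grub config and the install, assuming a BIOS (non-UEFI) machine where the config lives at /boot/grub2/grub.cfg, as is usual on CentOS 7. Run it inside the chroot; the helper name install_bootloader is hypothetical.

```shell
# Regenerate grub.cfg and write the boot loader to a disk.
install_bootloader() {
    disk="$1"   # e.g. /dev/sdb
    grub2-mkconfig -o /boot/grub2/grub.cfg
    grub2-install "$disk"
}

# Example (inside the chroot): install_bootloader /dev/sdb
```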

reboot

At this point you can reboot the system, choosing the new disk /dev/sdb from the bios, or unplug the old disk /dev/sda. If all worked out the system will boot; check mount points and raid status.

Or if it didn't work out.. well, we didn't touch any data or anything else on the original disk, so read more, start over.. don't complain, you're used to it :)

add old disk to array

Now we can add the old disk /dev/sda to the array. Change its partition type to 'Linux raid autodetect'.

Add disk to raid 1 array
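A sketch of adding the old disk's partitions to the arrays. From this point the data on /dev/sda is overwritten by the rebuild, so only do it once the system boots fine from the raid. The array names /dev/md0 to /dev/md2 and the helper name add_disk_to_mirrors are assumptions; check /proc/mdstat for the names your system actually uses.

```shell
# Add partition N of a disk to array md(N-1), filling the empty slot.
# WARNING: the partitions being added are overwritten by the resync.
add_disk_to_mirrors() {
    disk="$1"    # old disk, e.g. /dev/sda
    count="$2"   # number of partitions, 3 in this setup
    n=1
    while [ "$n" -le "$count" ]; do
        mdadm --manage "/dev/md$((n - 1))" --add "${disk}${n}"
        n=$((n + 1))
    done
}

# Example (as root): add_disk_to_mirrors /dev/sda 3
```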

Check rebuild
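While the mirror rebuilds, /proc/mdstat shows a progress line with a percentage for each array being synced. The helper below (hypothetical name rebuild_progress) is one way to pull out just the array name and percentage; "watch cat /proc/mdstat" gives the full picture.

```shell
# Read mdstat-formatted text on stdin and print "<array> <percent>"
# for every array that is currently recovering or resyncing.
rebuild_progress() {
    awk '/^md/ { name = $1 }
         /recovery|resync/ {
             for (i = 1; i <= NF; i++)
                 if ($i ~ /%$/) print name, $i
         }'
}

# Example: rebuild_progress < /proc/mdstat
```

Wait until no array reports progress any more and all of them show [UU] before pulling or rebooting anything.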

Reinstall grub on /dev/sda

monitoring

add to /etc/mdadm.conf
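What exactly the original added is not preserved. A typical addition for monitoring is a MAILADDR line, which tells mdadm's monitor mode where to send alerts about failed devices. The sketch below appends one if the file has none yet; the helper name set_mailaddr and the address "root" are assumptions.

```shell
# Append a MAILADDR line to an mdadm config file unless one exists.
set_mailaddr() {
    conf="$1"   # /etc/mdadm.conf on CentOS 7
    addr="$2"   # where alerts should go, e.g. root
    grep -q '^MAILADDR' "$conf" 2>/dev/null ||
        echo "MAILADDR $addr" >> "$conf"
}

# Example (as root): set_mailaddr /etc/mdadm.conf root
```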

raid-check

The status of the raid devices is checked once a week by default.

To change the parameters, check /etc/sysconfig/raid-check.

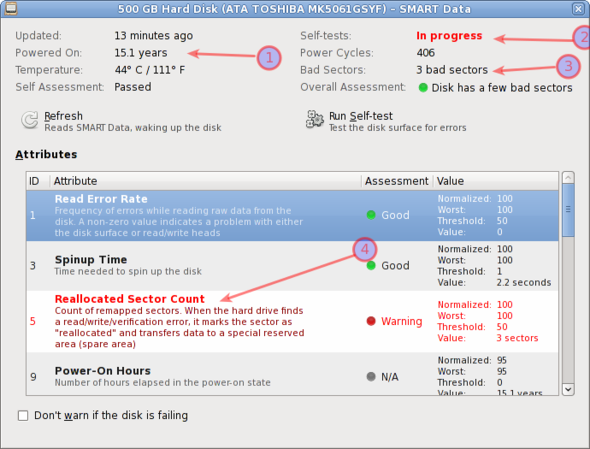

smart

Use smart features if available on hard drives

This is my personal configuration: comment all lines in /etc/smartmontools/smartd.conf and add
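The author's own configuration lines are not preserved, so what follows is NOT that configuration, only a generic example built from directives documented in smartd.conf(5): -a (monitor all attributes), -o on (automatic offline testing), -S on (attribute autosave), -s (self-test schedule) and -m (mail address). The helper name smartd_line is hypothetical, and the schedule is the common "short test daily at 02, long test Saturdays at 03" pattern.

```shell
# Print one smartd.conf line for a device.
smartd_line() {
    dev="$1"      # e.g. /dev/sda
    mailto="$2"   # e.g. root
    printf '%s -a -o on -S on -s (S/../.././02|L/../../6/03) -m %s\n' \
        "$dev" "$mailto"
}

# Example: one line per disk in the mirror
#   smartd_line /dev/sda root
#   smartd_line /dev/sdb root
```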

#EoF profit! ;P